BabyLM Challenge

Summary: This shared task challenges community members to train a language model

from scratch on the same amount of linguistic data available to a child. Submissions should be implemented in Huggingface's Transformers library and will be evaluated on a shared pipeline. This shared task is co-sponsored by

CMCL and

CoNLL.

• Models and results due

July 15, 2023 July 22, 2023, 23:59 anywhere on earth (UTC-12). Submit on

dynabench.

• Paper submission due

August 1, 2023 August 2, 2023, 23:59 anywhere on earth (UTC-12). Submit on

OpenReview.

See the

guidelines for an overview of submission tracks and pretraining data. See the

call for papers for a detailed description of the task setup and data.

Consider

joining the BabyLM Slack if you have any questions for the organizers or want to connect with other participants!

Overview

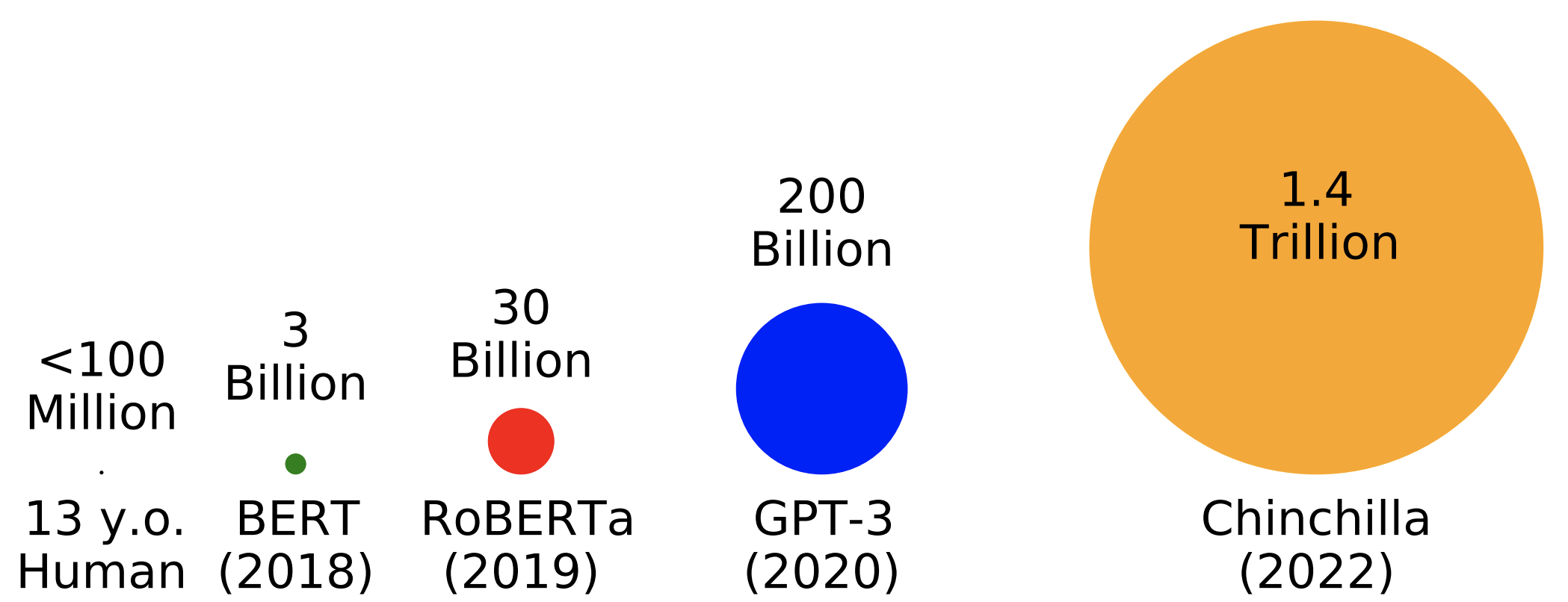

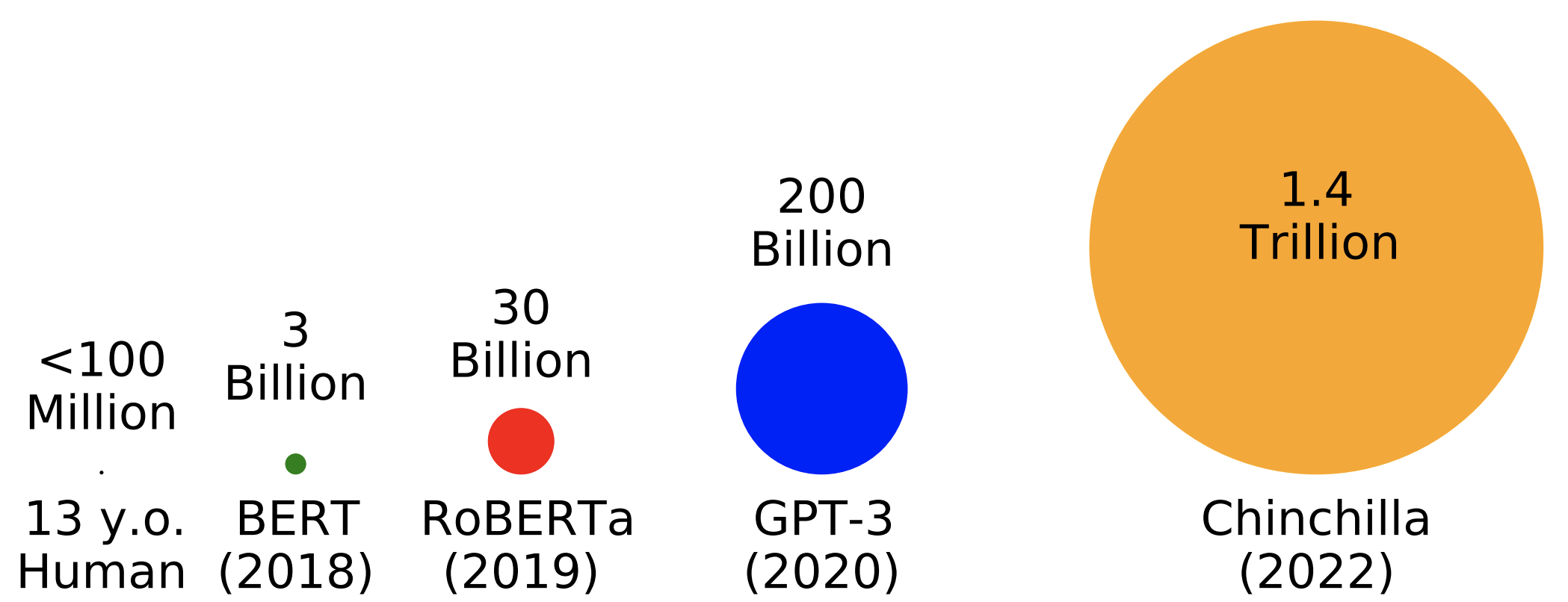

Huge effort has been put into optimizing LM pretraining at massive scales in the last several years. While growing parameter counts often get the most attention, datasets have also grown by orders of magnitude. For example,

Chinchilla sees 1.4

trillion words during training---well over 10000 words for every one word a 13 year old child has heard in their entire life.

The goal of this shared task is to incentivize researchers with an interest in pretraining or cognitive modeling to focus their efforts on optimizing pretraining given data limitations inspired by human development.

Additionally, we hope to democratize research on pretraining—which is typically thought to be practical only for large industry groups—by drawing attention to open problems that can be addressed on a university budget.

Why <100 Million Words?

Focusing on scaled down pretraining has several potential benefits:

First, small scale pretraining can be a sandbox for developing novel techniques for improving data efficiency. These techniques have the potential to then scale up to larger scales commonly seen in applied NLP or used to enhance current approaches to modeling low-resource languages.

Second, improving our ability to train LMs on the same kinds and quantities of data that humans learn from hopefully will give us greater access to plausible cognitive models of humans and help us understand what allows humans to acquire language so efficiently.

Organization Team

• Leshem Choshen

• Ryan Cotterell

• Kundan Krishna

• Tal Linzen

• Haokun Liu

• Aaron Mueller

• Alex Warstadt

• Ethan Wilcox

• Adina Williams

• Chengxu Zhuang

Submissions should be implimented in Huggingface's Transformers library and can be submitted along one of three submission tracks. The data has been released and can be downloaded

here.

Submission Tracks

We will evaluate models in three cateogries: strict , strict-small and loose .

• Strict and Strict-Small: The strict and strict-small tracks require that submissions are trained exclusively on a fixed dataset. The only difference between these tracks is the size of the dataset (~10M words vs. ~100M words). Winners will be determined based on performance on a shared evaluation set consisting of syntactic evaluation and NLU tasks.

• Loose: Submissions are still limited to ~100M words or less of training data, and will be tested on the shared evaluation set. However, they are permitted to use unlimited non-linguistic data. Additionally, training on additional text is allowed without limits if that text is generated by a model trained following the above restrictions. Winners will be selected holistically based on evaluation performance, relevance to the shared task goals, potential impact, and originality.

Pretraining Data

We distribute a developmentally plausible pretraining dataset inspired by the input to children. Submissions must use only this training data to be considered for the strict and strict-small tracks, but may use alternate or additional data for other tracks. The dataset has the following properties:

• Under 100M words: Children are exposed to 2M-7M words per year

(Gilkerson et al., 2017) . Choosing the beginning of adolescence (age 13) as a cutoff, children are exposed to at maximum 91M words, which we round up to 100M.

• Mostly transcribed speech: Most of the input to children is spoken; thus, our dataset focuses on transcribed speech.

• Mixed domain, consisting of the following sources: CHILDES (child-directed speech),

Subtitles (speech), BNC (speech), TED talks (speech), children's books (simple written language).

See the

call for papers for a detailed breakdown of the pretraining datasets.

Evaluation Pipeline

The public validation data we use is a mixture of BLiMP and (Super)GLUE tasks. We will hold out additional tasks for our final evaluation of submitted

models.

Results Submissions

The deadline for results submissions is July 15 July 22, 23:59 anywhere on earth (UTC-12).

Paper Submissions

The deadline for paper submissions is August 1 August 2, 23:59 anywhere on earth (UTC-12).

Submissions must be made through our

OpenReview portal.

We accept two types of paper submissions:

• Archival full papers (up to 8 pages)

• Non-archival extended abstract (up to 2 pages)

Submissions of both types are:

• given unlimited space for references,

• given unlimited space for appendices,

• given extra space for ethics/limitations, though these sections are optional

We allow dual submissions of archival papers with one of the co-sponsored workshops (CoNLL and CMCL). In the event that an archival paper is accepted to both BabyLM and one of these workshops, it can only appear in one of their proceedings (i.e., it must be withdrawn from one venue).

BabyLM will hold its own review process, separate from CoNLL and CMCL, and the proceedings will appear in their own volume. The acceptance criteria are based on soundness: We plan only to reject submissions that make incorrect or unjustified claims. Other feedback will be directed at the improvement of submissions.

Timeline

January 2023: Training data released

March 2023: Shared evaluation pipeline released

July 15, July 22, 2023: Models and results due

August 1, August 2, 2023: Paper submissions due

September 30, 2023: Reviews due

October 6, 2023: Notification of acceptance

October 20, 2023: Camera ready due

Date TBA: Shared task presented at CoNLL