BabyLM Challenge

Summary: BabyLM returns for its 4th year as both a shared task and a workshop at EMNLP 2026. This round keeps the core goal: sample-efficient pretraining under human-scale data budgets, while updating the track structure and datasets.

→ A detoxified 100M-word Strict dataset and a detoxified 10M-word Strict-Small dataset.

→ A new

MultiLingual track based on

BabyBabelLM, with evaluation focusing on

English, Dutch, and Chinese.

Track update: This year introduces a dedicated MultiLingual track, and removes Multimodal and Interaction

as standalone competition tracks, both are now subsumed into Strict / Strict-Small (paired image-text data and teacher-model feedback are allowed).

See the

guidelines for an overview of submission tracks and pretraining data. See the updated call for papers for the full task setup, track rules, and dataset details.

Consider

joining the BabyLM Slack if you have any questions for the organizers or want to connect with other participants!

Submission Links and timeline

Submissions will be accepted

via ACL Rolling Review (ARR) or

directly through OpenReview. Official OpenReview portal links will be posted on this site once they are live.

Tentative timeline:

February 25 2026: Call for papers and Training data released

April 2026: Evaluation pipeline and baselines released

May 25 2026: ARR submission deadline

July 15 2026: Direct submission deadline (Both, Challenge and Workshop)

Early August 2026: Direct submission reviews due, ARR commitment deadline

Mid August 2026: Paper decisions released

Early September 2026: Camera ready due

Oct 24-29 2026: Workshop at EMNLP in Budapest

Updated Rules for BabyLM Round 4

•

New track: MultiLingual. Participants train on a MultiLingual mixture from

BabyBabelLM. The challenge track focuses on

English, Dutch, and Chinese, and allows a custom mixture totaling

100M tokens (with word counts adjusted by each language’s Byte Premium in baseline construction).

• Track restructuring: Dedicated Multimodal and Interaction competition tracks have been removed. Instead, participants may use paired image-text data and/or leverage feedback from a teacher model during training or Strict or Strict-Small.

• Updated (detoxified) datasets: We release a modified detoxified training dataset, including: 100M word Strict, 10M word Strict-Small, and 100M word + image Multimodal.

• Compute/epochs limit remains: Competition entries may not conduct more than 10 epochs over their training data (this restriction applies to competition entries; workshop-only papers are not required to follow it).

Overview

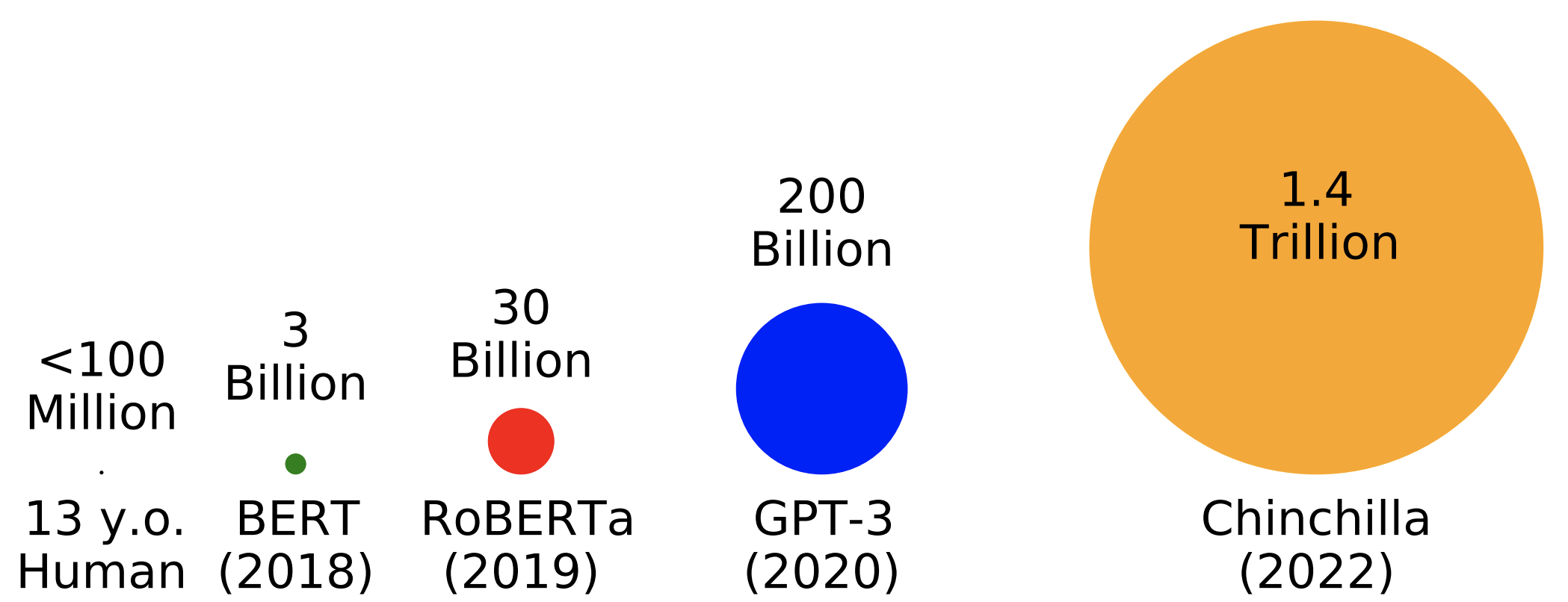

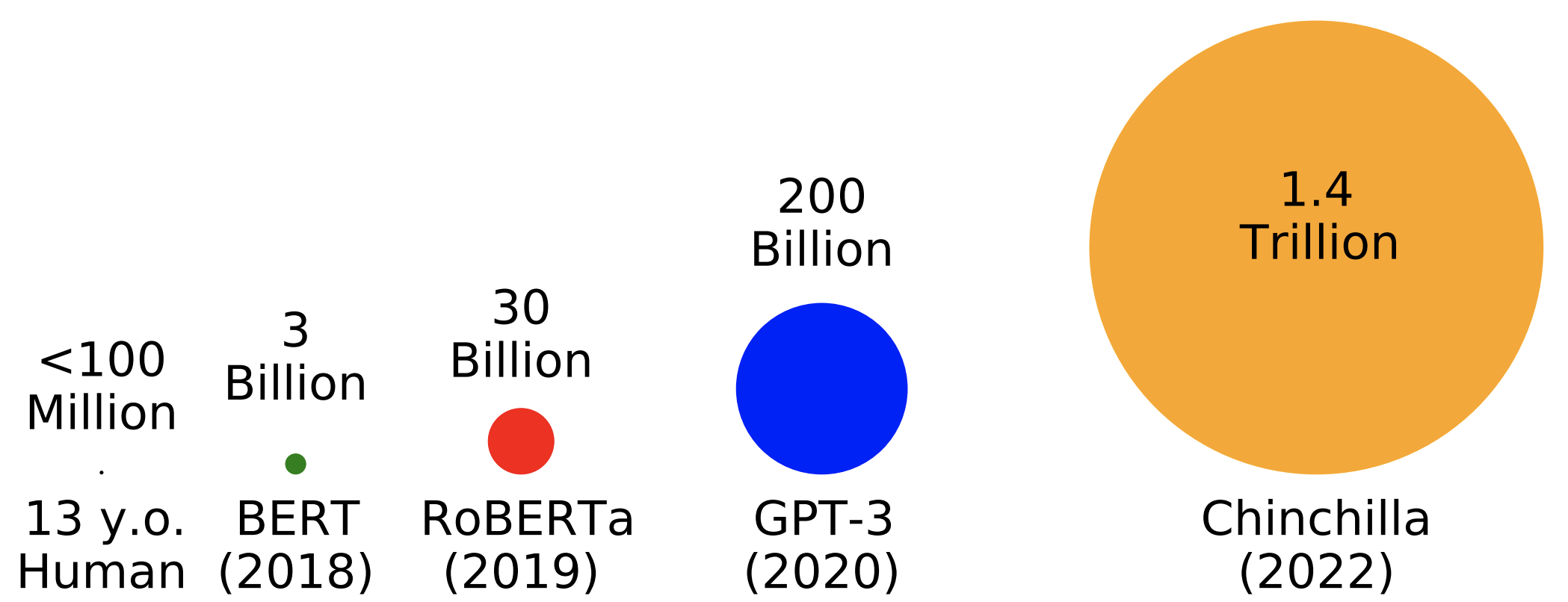

Huge effort has been put into optimizing LM pretraining at massive scales in the last several years. While growing parameter counts often get the most attention, datasets have also grown by orders of magnitude. For example,

Chinchilla sees 1.4

trillion words during training--well over 10000 words for every one word a 13 year old child has heard in their entire life.

The goal of this workshop is to incentivize researchers with an interest in pretraining or cognitive modeling to focus their efforts on optimizing pretraining given data limitations inspired by human development. Additionally, we hope to democratize research on pretraining, which is typically thought to be practical only for large industry groups, by drawing attention to open problems that can be addressed on a university budget.

Why <100 Million Words?

Focusing on scaled-down pretraining has several potential benefits:

First, small-scale pretraining can be a sandbox for developing novel techniques for improving data efficiency. These techniques have the potential to then scale up to larger scales commonly seen in applied NLP or used to enhance current approaches to modeling low-resource languages. Second, improving our ability to train LMs on the same kinds and quantities of data that humans learn from, hopefully, will give us greater access to plausible cognitive models of humans and help us understand what allows humans to acquire language so efficiently.

Organization Team

• Leshem Choshen (IBM Research, MIT)

• Ryan Cotterell (ETH Zurich)

• Mustafa Omer Gul (Cornell University)

• Jaap Jumelet (University of Groningen)

• Tal Linzen (NYU)

• Aaron Mueller (Boston University)

• Suchir Salhan (University of Cambridge)

• Raj Sanjay Shah (Georgia Institute of Technology)

• Alex Warstadt (UCSD)

• Ethan Wilcox (Georgetown)

The BabyLM Challenge was previously held as a shared task (2023-2025) and a workshop (2025). At the following link, you can find the last year's call for papers .